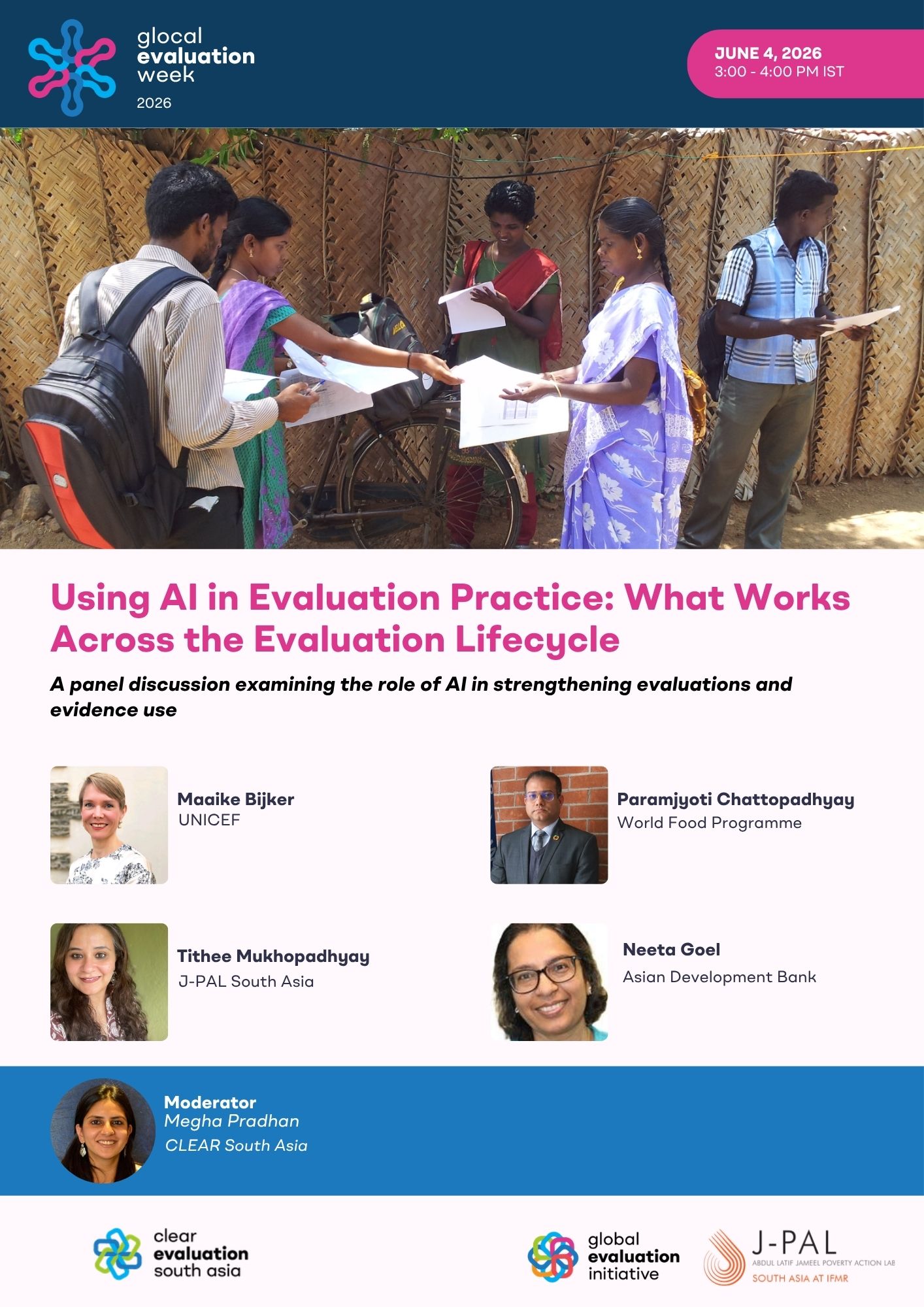

Using AI in Evaluation Practice: What Works Across the Evaluation Lifecycle

Panel | Online

Organized by:

CLEAR South Asia

About the Event

Artificial intelligence is becoming the newest addition to the evaluator's toolkit. As it takes on everyday evaluation tasks such as data processing, analysis, and reporting, it is changing the nature of evaluation practice and evidence use. In doing so, AI places evaluation at a crucial crossroads: using these tools to strengthen rigour, or adopting them in ways that prioritise speed at the expense of clarity and credibility.

This session, moderated by J-PAL South Asia, will examine how artificial intelligence is being applied across the stages of the evaluation lifecycle, from instrument design and data collection to analysis and reporting. Alongside current applications, the discussion will focus on practical considerations for using these emerging technologies responsibly, including how to keep the evaluator in the loop, how outputs are reviewed and validated, and how AI can be used alongside established methods to ensure meaningful impact.

The session will feature practitioners and technical experts from multilateral organisations, along with implementing partners who have applied these tools in practice. Through these examples, participants will examine where AI has added value and where it has raised challenges, especially in verifying outputs and maintaining confidence in evaluation findings. The discussion aims to move beyond the question of whether to adopt AI towards clearer choices about where AI can be relied upon, where it should be treated with caution, and how its use can strengthen, rather than blur, the foundations of credible evaluation.
Speakers

| Name | Title |
|---|---|
| Neeta Goel | Senior Evaluation Specialist, Independent Evaluation Department, Asian Development Bank |
| Maaike Bijker | Chief of Evidence (Data, Research, and Evaluation), UNICEF India |
| Paramjyoti Chattopadhyay | Head of Research, Assessment, Monitoring (RAM) and Evaluation, World Food Programme India |
| Tithee Mukhopadhyay | Deputy Executive Director, J-PAL South Asia |

Moderators

| Name | Title |
|---|---|
| Megha Pradhan | Director, CLEAR South Asia |